It’s 2022 and everyone wants to be data-driven, but this isn’t anything new. Companies have struggled to become data-driven for the last few decades (if not longer).

But many of them continually fail.

In many cases, companies still struggle to accurately count total active customers or report revenue per customer, even after investing large amounts in data infrastructure, reporting and staff.

Data projects that succeeded at one point tend to fail later, and for many reasons. One of them is that data teams tend to be the middle layer: they have to abide by the software and business needs while they toil to make sense of all the crazy and ever-changing business logic.

But this is just one of many reasons data teams and projects fail or struggle to maintain some semblance of success.

Overcomplicated systems, the exodus of a key person, and a lack of alignment are also common culprits when data teams fail. All in all, data isn’t the new anything if your company’s data initiatives fail.

Let’s dig through just a few reasons why your data strategy or project might fail.

Key Person Dependencies

Over the past 12 months, I have taken on 3 different projects that involved companies struggling to piece together their data infrastructure.

Not because anyone was nefarious or purposely destroyed it. Instead, either a single employee or an entire team that had supported their data infrastructure left. In some cases, this can be nearly as bad as a company’s data infrastructure being deleted.

All the knowledge of why a system operates the way it does, the logic behind the tables that were developed, and the reasons for certain workarounds is lost.

For many companies, this is like starting over at level one (or nearly so). Whoever they hire in the future will now have to spend time looking through the old infrastructure, talking to vendors, talking to stakeholders, and reading through what little documentation may exist just to get to a good baseline.

At this point, the company has easily:

Spent 30-50k hiring said replacement employee

Spent 35-60k to have them go through this initial review of all the data infrastructure

Spent 10-20k a month on data solutions that are being underutilized

So companies have already spent 100k+ just to get back to normal.

To avoid throwing away nearly 150k just to get back to a baseline, it’s generally better to have some redundancy. Having an extra employee as well as training other employees in similar roles can reduce the impact when an important team member leaves.
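As a rough sanity check on those numbers, here is a minimal sketch that simply sums the ranges from the list above. The ranges come from the list; the function name and the three months of underutilized tooling are assumptions picked for illustration, not figures from a real engagement.

```python
from typing import Tuple

# Cost ranges in thousands, taken from the list above.
HIRING = (30, 50)
INFRA_REVIEW = (35, 60)
TOOLS_PER_MONTH = (10, 20)


def replacement_cost(months_underutilized: int) -> Tuple[int, int]:
    """Return the (low, high) total cost in thousands.

    months_underutilized is an assumption: how long the data tools sit
    mostly idle while the new hire gets up to speed.
    """
    low = HIRING[0] + INFRA_REVIEW[0] + TOOLS_PER_MONTH[0] * months_underutilized
    high = HIRING[1] + INFRA_REVIEW[1] + TOOLS_PER_MONTH[1] * months_underutilized
    return low, high


if __name__ == "__main__":
    low, high = replacement_cost(months_underutilized=3)
    print(f"Estimated cost to get back to baseline: {low}k to {high}k")
```

With three months assumed, the total lands between 95k and 170k, which brackets the 100k to 150k figures quoted here.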

Chasing The New Thing

There are a lot of new data tools, best practices and solutions on the market today. Many of these tools can be very helpful and can (in theory) reduce your team’s workload. However, a data team that is constantly chasing new technology, constantly running POCs and never truly delivering results will quickly find its members looking for new jobs.

No results mean unhappy CFOs, directors and stakeholders.

Unhappy CFOs, directors and stakeholders mean people get fired.

New technology and tools can make a data team’s life easier.

But they can also make it harder.

More tools generally mean more overhead, more costs and more distractions. Without a clear mission or end goal, teams tend to treat implementing new tools and setting up infrastructure for infrastructure’s sake as the end goal of their work (instead of driving business value).

It’s a constant issue in the data space.

It only takes a few years for a massive shift or trend to take hold, and there can be a lot of FOMO. In turn, this leads to distracted teams wondering if their current data infrastructure implementation is good enough. Not to mention, if they aren’t driving real value, they can point to their poor tooling as the issue.

In the end, this can lead to projects that never truly land and instead merely lie unfinished for the next team to take on.

Lack Of Buy-In (Real Buy-In) From Management

Being data-driven sounds nice and it’s common for upper-level management to initially buy into this idea. However, it's important to get real buy-in for your new data initiatives, strategies, and projects. Someone on the business side needs to be held accountable for the success or failure of the project just as much as someone on the tech side.

Data teams translate a lot of complex business processes and logic into KPIs and various reporting layers, which takes time and requires input from the business team (especially on whether the data or logic makes sense). All of this is challenging. If there is no buy-in from the business, the project will likely fail. And this doesn’t even include the discussions that will likely be required with engineering to get access to databases behind firewalls and to set up the correct infrastructure, budgets, and tools so that your data teams can be successful in the long term.

No leadership buy-in means that when your data teams run into issues such as pushback from other teams, they will fail. There isn’t a question about it. Buy-in, real buy-in, must happen.

Lack Of Communication

Communication is a very broad term, so simply stating that a lack of communication leads to failures is, one, obvious and, two, nondescript.

What I mean by communication is that teams and team leads that don’t provide transparency into what they are trying to do, what is blocking them, and how they are progressing in a project will struggle to be successful. One thing I picked up from Facebook, and that I believe is a habit of most successful data teams (including those outside big tech), is a combination of one-pagers that define a project and outline its timeline, plus regular updates on where the project stands.

Gergely Orosz has a great thread about communication where he outlines just this.

Providing regular updates, I believe, has several benefits. It lets everyone who is interested in the project know how it is tracking, whether there are major blockers and so on. In turn, this means that other teams can plan around the timing of said project.

In addition, this forces the individuals leading the project to hold themselves more accountable. If you have nothing to post every week or two, that’s not good. No progress being made in that period means the project is stalled and there are issues that need to be addressed.

Overall this leads to better velocity of work as teams are made aware of the status of projects they might be waiting for.

Lack Of Data Quality And Reliability

Skipping data quality has become easier and easier with low-code solutions that allow you to quickly load data into your data storage systems.

Initially, there might be no problems when skipping data quality. Perhaps your team puts out a few dashboards and everyone is happy, until one day a new data set is pulled in that is a little less straightforward, or a change in your current data breaks your dashboards.

Suddenly you get a ping from your CFO complaining that the dashboard is wrong. You didn’t notice because you were working on the next dashboard, and now you’re in firefighting mode. This isn’t where anyone wants to be. Not integrating data quality and reliability early will often lead to pain later, not to mention a loss of trust in your data team. The solution here is simple. Incorporate data reliability early.
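What "early" can look like in practice is a handful of checks that run on every batch before it reaches a dashboard. The sketch below is a minimal, hypothetical example in plain Python: the function name, the column names and the three checks (empty batch, missing required values, duplicate keys) are assumptions chosen for illustration, not a specific tool’s API.

```python
from typing import Dict, List, Optional, Sequence

Row = Dict[str, Optional[object]]


def find_quality_issues(rows: Sequence[Row], required: Sequence[str], key: str) -> List[str]:
    """Return human-readable problems found in a batch of rows.

    Checks (all illustrative): the batch is not empty, required columns
    are populated, and the key column has no duplicate values.
    """
    if not rows:
        return ["batch is empty"]

    issues: List[str] = []
    seen_keys = set()
    for i, row in enumerate(rows):
        # Flag any required column that is absent or None.
        for column in required:
            if row.get(column) is None:
                issues.append(f"row {i}: missing {column}")
        # Flag keys that already appeared earlier in the batch.
        value = row.get(key)
        if value is not None:
            if value in seen_keys:
                issues.append(f"row {i}: duplicate {key}={value}")
            seen_keys.add(value)
    return issues


if __name__ == "__main__":
    batch = [
        {"customer_id": 1, "revenue": 120.0},
        {"customer_id": 1, "revenue": 80.0},   # duplicate customer_id
        {"customer_id": 2, "revenue": None},   # missing revenue
    ]
    for issue in find_quality_issues(batch, required=["customer_id", "revenue"], key="customer_id"):
        print(issue)
```

Failing the load, or at least alerting the data team, when this list is non-empty means you hear about the problem before your CFO does.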

Avoiding Failure Means Planning

Being data-driven is hard because it takes a decent amount of effort up front, even with all the tools and low-code solutions on the market today. There are more ways to put together solutions that don’t work than ones that do. There are more ways to spend money on solutions than to create value with data.

In addition, a lack of process and distracting new solutions can keep a data team from truly succeeding.

We live in a world that likes moving quickly. To add to that, there has always been a push to get access to data quickly. But without any planning, at least to a baseline degree, your project will likely fail.

Answering baseline questions such as who on the business side will support the project, how the project aligns with the company’s larger strategy, and what the timeline for the project is decreases the likelihood of project failure.

Feel free to ping our team if you need help with your data infrastructure or initiatives.

Throw Back Video - 5 In Demand Data Jobs And How Much They Make

Rockset Beats ClickHouse and Druid on the Star Schema Benchmark (SSB)

Rockset is 1.67 times faster than ClickHouse and 1.12 times faster than Druid on the Star Schema Benchmark. Rockset executed every query in the SSB suite in 88 milliseconds or less.

What does this mean?

Real-Time Data Platforms Emerge for Application Serving: The core of the modern data stack is the data platform which until now has been batch but is quickly going real-time. Real-time platforms like Rockset, ClickHouse and Druid are used by developers for serving modern data applications.

Rockset Is the Only “Built for the Cloud” Real-Time Data Platform: Teams have traditionally had to do time-consuming data preparation, cluster tuning and infrastructure management in order to meet the performance requirements of their application when using ClickHouse or Druid. Now, teams can get performance and cloud simplicity with Rockset.

Benchmarks Need to Evolve for Real-Time Analytics: SSB shows the millisecond latency query performance of real-time data systems. But that’s only one part of the story. The next generation of benchmarks needs to measure query performance with real-time ingest.

A very special thank you to Rockset for sponsoring the newsletter this week!

Articles Worth Reading

There are 20,000 new articles posted on Medium daily and that’s just Medium! I have spent a lot of time sifting through some of these articles as well as TechCrunch and companies’ tech blogs and wanted to share some of my favorites!

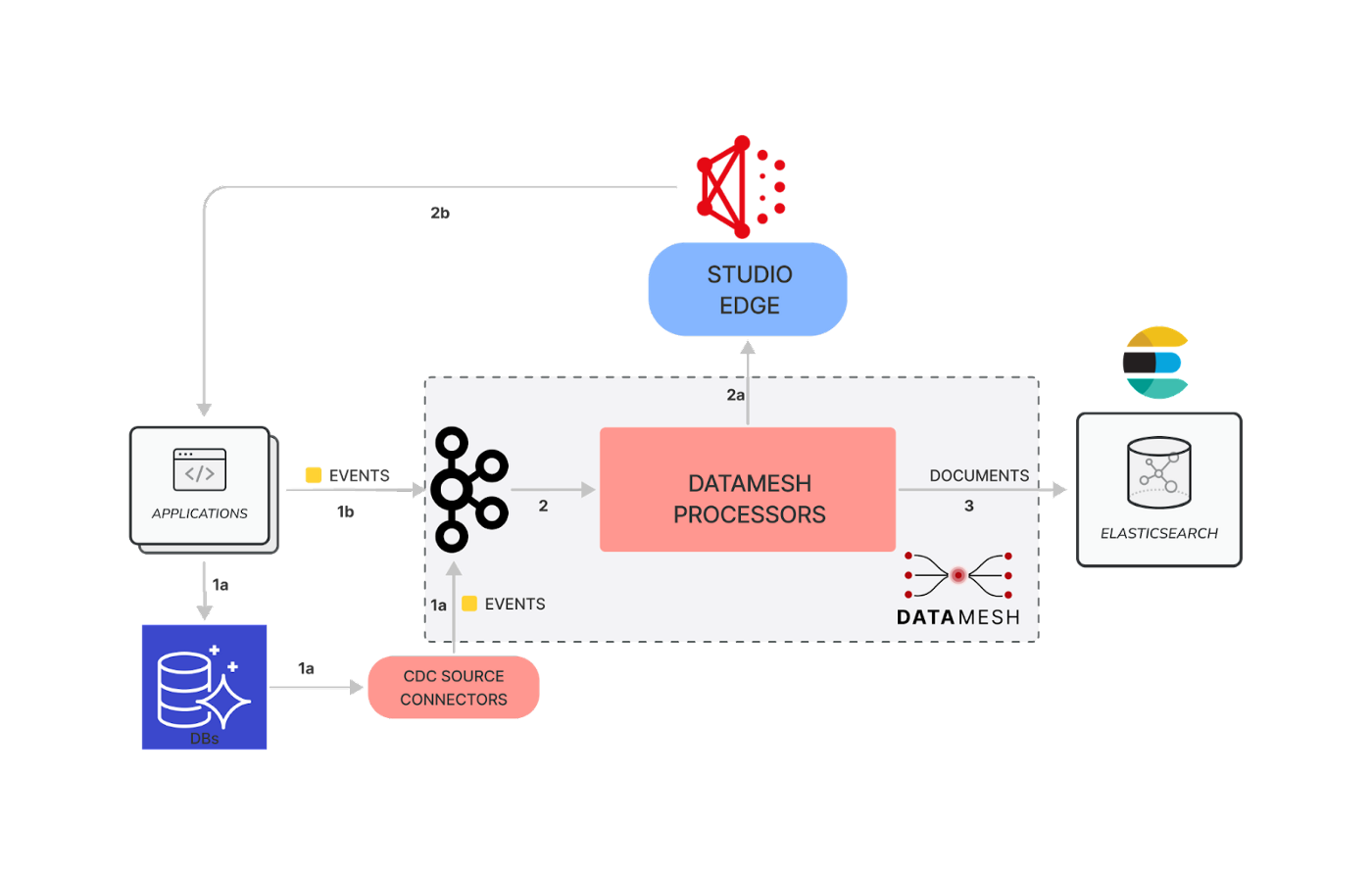

How Netflix Content Engineering makes a federated graph searchable

Over the past few years Content Engineering at Netflix has been transitioning many of its services to use a federated GraphQL platform. GraphQL federation enables domain teams to independently build and operate their own Domain Graph Services (DGS) and, at the same time, connect their domain with other domains in a unified GraphQL schema exposed by a federated gateway.

As an example, let’s examine three core entities of the graph, each owned by separate engineering teams:

Movie: At Netflix, we make titles (shows, films, shorts etc.). For simplicity, let’s assume each title is a Movie object.

Production: Each Movie is associated with a Studio Production. A Production object tracks everything needed to make a Movie including shooting location, vendors, and more.

Talent: the people working on a Movie are the Talent, including actors, directors, and so on.

What Is Trino And Why Is It Great At Processing Big Data

Big data is touted as the solution to many problems. However, the truth is, big data has caused a lot of big problems.

Yes, big data can provide more context and insights we have never had before. It also makes queries slow, makes data expensive to manage, requires a lot of expensive specialists to process it, and just continues to grow.

Overall, data, especially big data, has forced companies to develop better data management tools to ensure data scientists and analysts can work with all their data.

One of these companies was Facebook, which decided it needed to develop a new engine to process all of its petabytes effectively. This tool was called Presto, which recently broke off into another project called Trino.

Best practices to optimize data access performance from Amazon EMR and AWS Glue to Amazon S3

Customers are increasingly building data lakes to store data at massive scale in the cloud. It’s common to use distributed computing engines, cloud-native databases, and data warehouses when you want to process and analyze your data in data lakes. Amazon EMR and AWS Glue are two key services you can use for such use cases. Amazon EMR is a managed big data framework that supports several different applications, including Apache Spark, Apache Hive, Presto, Trino, and Apache HBase. AWS Glue Spark jobs run on top of Apache Spark, and distribute data processing workloads in parallel to perform extract, transform, and load (ETL) jobs to enrich, denormalize, mask, and tokenize data on a massive scale.

End Of Day 41

Thanks for checking out our community. We put out 3-4 newsletters a week discussing data, the modern data stack, tech, and start-ups.

If you want to learn more, then sign up today. Feel free to sign up for no cost to keep getting these newsletters.